If you’ve been hunting for an AI writing tool that actually preserves your voice across a novel-length project, you’ve probably hit the same wall every other writer has. You paste your writing in. You ask for a rewrite. You add the magic phrase: preserve my voice. The model returns something competent, polished, sometimes objectively better. And it isn’t yours.

A new Berkeley paper just explained why this happens, regardless of which tool you’re using. The drift is structural. No prompt formulation fixes it. A different architectural approach does, and that’s what bookmoth is built on.

Earlier this month, fantasy author Lena McDonald published Darkhollow Academy: Year 2. Inside the published book, readers found this sentence stranded in the prose:

“I’ve rewritten the passage to align more with J. Bree’s style, which features more tension, gritty undertones, and raw emotional subtext beneath the supernatural elements.”

McDonald had pasted her editing prompt directly into her manuscript and missed it on copy-edit. The internet did the rest. The story was reported as another disclosure scandal: another author caught using AI without saying so.

The scandal underneath the scandal is more interesting. The prompt itself worked. McDonald asked the model to mimic another writer’s voice, and it did, well enough that the only reason any reader noticed was the clipboard slip. The defense against AI flattening or replacing your voice in 2026 is a writer’s discipline. The actual mechanism for preserving voice doesn’t exist in most of the tools we currently use.

Except in one category. The Berkeley research explains both the failure and the alternative.

Why does AI writing flatten your voice even when you tell it not to?

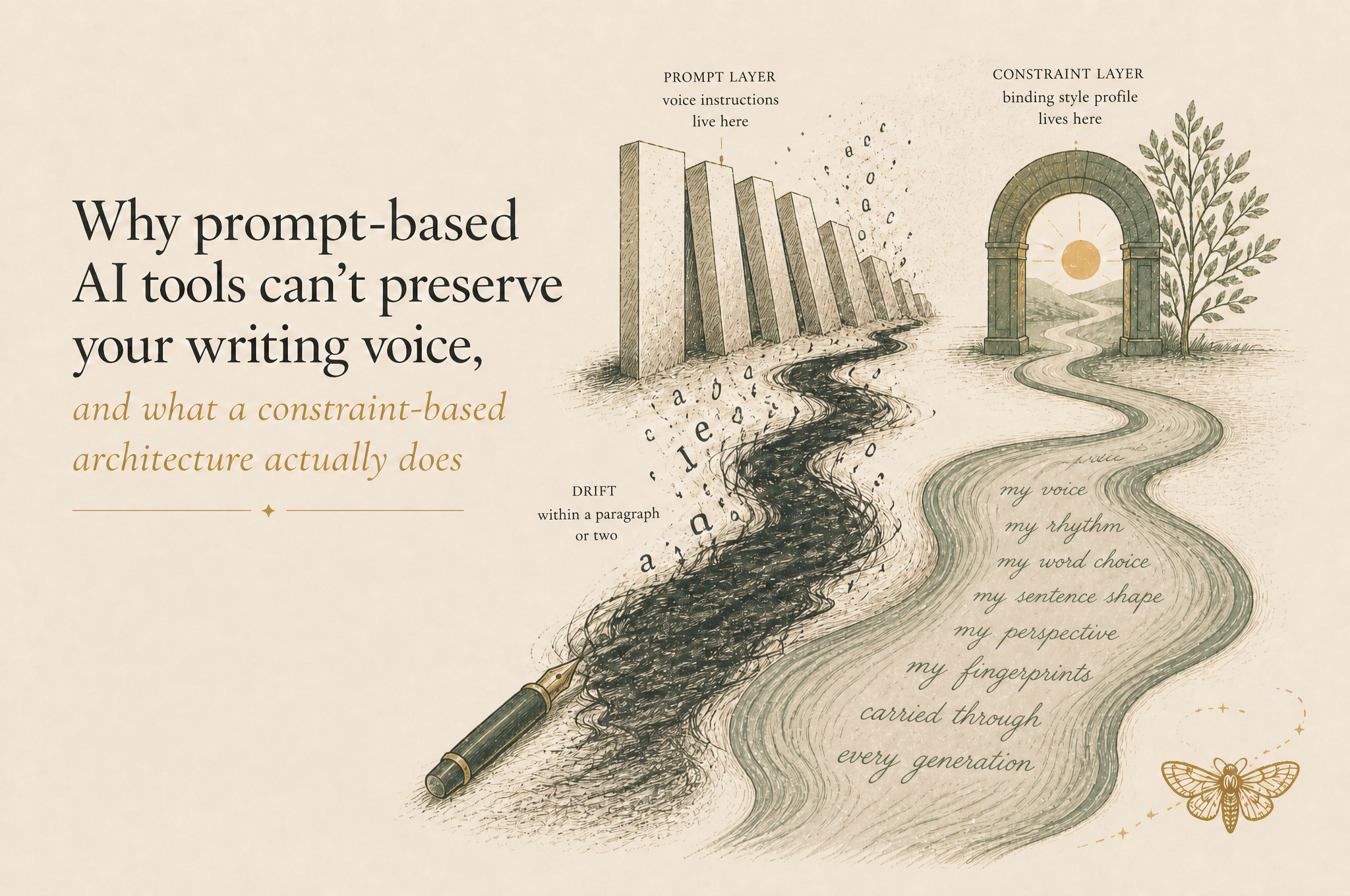

AI writing tools flatten your voice because voice instructions live at the prompt layer of the model, where the post-training distribution overrides them within a paragraph or two. Telling the model to “preserve voice” works for a sentence. It doesn’t survive a chapter.

Here’s the mechanism. Frontier language models like Claude, ChatGPT, and Gemini are post-trained on massive amounts of edited prose. The post-training process selects for what professional editors prefer: cleaner sentences, more standard vocabulary, more elaborate punctuation, greater distance from raw first-person voice. That preference becomes the model’s central voice.

When you prompt the model to revise your writing while preserving your voice, you’re asking it to suppress its own central voice in favour of yours. It can do that for a sentence or two. The post-training distribution still pulls every generation back toward the centre. By the third or fourth sentence the central voice is creeping back. By the third or fourth paragraph the voice you started with is gone.

This is the same dynamic behind the active complaint about Claude Opus 4.7 across writer communities. The “memo voice” complaint, the “reaches for bullet points” complaint, the “feels like getting an email instead of a thoughtful colleague” complaint, are all the surface manifestation of the same architectural drift. It isn’t user error. It isn’t bad prompts. It’s the architecture, and it has nothing to do with which model you choose.

What did the Berkeley study actually prove about AI revision?

A 2026 Berkeley study by Tom van Nuenen measured thirteen stylometric markers across three frontier language models in three prompt conditions, including the explicit “preserve voice” instruction. Every model in every condition drifted in the same direction. Voice-preserving prompts only reduced the magnitude of the drift, not the direction.

The setup was clean. Van Nuenen took a corpus of 300 first-person narratives. He sent each through three frontier large language models. He prompted under three conditions: a generic “improve this” instruction, a generic “rewrite this” instruction, and an explicit “revise this while preserving the original voice” instruction.

He measured thirteen stylometric markers in both the input and the output: function words, contractions, first-person pronouns, vocabulary diversity, average word length, punctuation patterns, emotion words, and others. The markers are the same kind of multi-axis fingerprint that allowed Claude Opus 4.7 to identify journalist Kelsey Piper from 125 unpublished words a couple of weeks ago, just measured directly rather than inferred.

The finding: every model, every condition, drifted in the same direction. Fewer function words in the output. Fewer contractions. Fewer first-person pronouns. Greater vocabulary spread. Longer words. More elaborate punctuation. The shift moved from embedded narration toward distanced narration. The drift was identical across “improve,” “rewrite,” and “preserve voice.” The voice-preserving prompt only reduced the magnitude of the drift. It did not change the direction.

In plain language: every AI revision prompt makes prose more polite, more formal, more eager to please, slightly distant from the writer who started the sentence. Even the prompt that says don’t.

[Source: van Nuenen, “Voice Under Revision: Large Language Models and the Normalization of Personal Narrative,” arxiv 2604.22142, submitted April 24, 2026.]

Can Sudowrite, NovelCrafter, Claude, or ChatGPT actually preserve your voice?

No, not for long-form work. Sudowrite, NovelCrafter, and base Claude or ChatGPT all rely on prompt-level voice instructions. The Berkeley study demonstrates this entire architectural layer fails systematically. The drift is identical regardless of which prompt-based AI writing tool you use.

It’s worth being specific about why each of these tools falls in the same category, because writers often switch between them looking for the one that doesn’t drift.

Sudowrite provides Codex (a structured world/character bible), style descriptions, and sample passages. All of these feed into the model as prompt scaffolding. They’re more sophisticated than a bare chat interface, but they sit at the same architectural layer, and Sudowrite’s Muse model still drifts within a chapter for the same structural reason van Nuenen identified.

NovelCrafter provides BYOK access to multiple frontier models, plus a Codex similar to Sudowrite’s. The model selection helps marginally (some models drift slightly less than others) but doesn’t change the architecture. Voice instructions still live at the prompt layer.

Base Claude or ChatGPT with custom instructions, projects, or pasted samples is the prompt layer in its purest form. The Berkeley study used this exact setup and demonstrated unambiguously that it fails.

The pattern is consistent because the architecture is consistent. Looking for the right Sudowrite alternative or NovelCrafter alternative within the prompt-based category is solving the wrong problem. The category itself has the structural ceiling van Nuenen measured.

What kind of AI writing tool actually preserves your voice across a novel?

The category that works is constraint-based, not prompt-based. AI writing tools that compile your writing samples into a structured style profile and apply it as a binding constraint on every generation can hold voice across chapters, where prompt-based tools cannot. bookmoth is the working implementation of this architecture for novelists and long-form writers.

The architectural distinction matters because it’s the difference between voice surviving and voice drifting.

In a prompt-based tool, you describe your voice (or paste samples of it) into a text field, and that description gets fed to the model alongside the user’s request. The model uses the description to anchor the first sentence or two of its output. After that, the post-training distribution pulls the generation back toward the model’s central voice. Van Nuenen’s paper proved this happens reliably across all three models he tested.

In a constraint-based tool, the architecture is fundamentally different. Your writing samples are analysed before any drafting begins, your voice is extracted into a structured style profile (rhythm, syntax, diction, structural tendencies, dialogue register), and that profile is applied as a binding rule on every generation. Not as a prompt parameter that can be overridden. As a constraint that governs every output. The voice instruction never lives in the model’s instruction layer where the post-training distribution can pull it off course.

bookmoth is built on this constraint-based architecture specifically for novelists and long-form writers. It also locks the underlying model to Claude Opus 4.6 rather than 4.7, because 4.6’s central voice is less entrenched and therefore easier to deviate from under constraint pressure. The combination is what allows voice preservation across an entire novel rather than across a paragraph.

There are a small number of other tools approaching the same architectural idea from different angles. Together they form the category that survives van Nuenen’s findings, because they’re not doing the thing the paper proves doesn’t work.

How does the constraint-based architecture work in practice?

A constraint-based AI writing tool reverse-engineers your voice from samples you’ve already written, compiles those patterns into a structured style profile, and applies that profile as a binding rule on every generation. The voice instruction never lives in the prompt layer where the model’s post-training defaults can override it.

Here’s the practical workflow. You feed the tool 5,000 to 50,000 words of your existing prose (chapters from a previous book, blog posts, essays, anything in your established voice). The tool analyses the prose across multiple stylometric axes: sentence length distribution, function word frequency, contraction rate, first-person pronoun density, paragraph rhythm, dialogue register, structural tendencies. It compiles those patterns into a structured style profile.

When you start drafting, the style profile becomes a binding constraint applied to every generation, not a prompt instruction. The model can’t drift away from your voice across a chapter the way it does in prompt-based tools, because there’s no point in the architecture where the prompt is the only thing holding the voice in place.

This matters at scale because writing is at scale. A short story might be 5,000 words. A novel is 80,000 to 100,000. The drift in prompt-based tools that’s barely visible in a 500-word output becomes catastrophic over a 100,000-word draft. The constraint-based architecture is the only one that holds the voice from chapter one to chapter forty.

bookmoth was built around this exact distinction. The Berkeley paper is the peer-reviewed version of the architecture we’ve been working on for the last year. If you’ve been waiting for empirical evidence that the prompt-based approach can’t get there, the Berkeley study is it. If you’ve tried Sudowrite, NovelCrafter, or base Claude and felt the drift compound across a long-form project, the architecture is the answer.